AI in QA - The Fear Around AI and Jobs

“We tend to overestimate the effect of a technology in the short run and underestimate the effect in the long run.” - Roy Amara

Every time a new technology starts gaining traction, the same question shows up almost immediately: What happens to human jobs now? And AI is no exception. The moment it began showing it could write, summarize, analyze, generate, and automate at speed, the conversation quickly shifted from capability to consequence.

For many professionals, the question became: If AI can do so much, where does that leave human work? In QA and software testing, that question is even more pressing. Because testing already operates under constant pressure for speed, precision, scale, and repeatability, it is easy to see why many assume AI would target this space first.

But evidence suggests otherwise. According to a study by researchers from Stanford and MIT, AI assistance increased productivity by around 14-15% on average. For less experienced and lower-skilled workers, the gains went up to 30%.

What this suggests is that AI is less a replacement for testers than a way to help them do more with the time and expertise they already have.

The Reality Check: Most AI Projects Still Struggle to Deliver ROI

AI may be improving how work gets done, but achieving ROI is still a challenge. A recent MIT study found that 95% of generative AI pilots fail to deliver measurable ROI for companies, not because the technology is flawed, but because many organizations still struggle with integration and alignment.

There are a few recurring reasons behind this:

Poor Integration With Business Workflows

In many organizations, AI is introduced as an add-on instead of being built into actual workflows. When workflows were never designed to support AI, the impact remains limited. As highlighted in TechRadar, only 5% of organizations reported achieving sustained value from AI when it was not integrated into their core workflows. AI might work well in isolation, but if it does not fit naturally into your day-to-day workflow, its full value is rarely realized.

Unclear Use Cases

Many AI initiatives begin without a clearly defined business problem. They start as experiments, often with broad expectations but no measurable outcome in mind. According to Gartner, over 40% of agentic AI projects are expected to be cancelled by the end of 2027, with unclear business value being a key reason. When your use case is vague, ROI becomes very difficult to prove, even if the technology itself is functioning well.

Adoption Driven By Hype Rather Than Value

Some organizations invest in AI simply to keep up with market trends rather than to solve actual business needs. This often leads teams to focus on visible or trendy applications instead of useful ones. McKinsey states that nearly two-thirds of organizations have still not begun scaling AI across the enterprise, and only 39% reported a measurable impact on business performance. Impressive demos do not always translate into operational or financial value.

Evidence That AI Increases Productivity

By now, you don’t need to treat AI productivity as a hypothetical. There is enough evidence that AI can improve output in measurable ways, especially when it is used to support people in the work they already do.

Interestingly, the biggest gains do not always go to the most experienced professionals. In many cases, AI helps newer or less experienced workers ramp up faster, reduce friction, and perform more consistently. What often slows people down is not a lack of ability, but a lack of guidance, context, or time.

The Stanford HAI 2025 AI Index supports this by highlighting that AI is increasingly being linked to productivity gains and narrower skill gaps across the workforce. And this is where AI fits best, as a productivity multiplier and not a replacement for human judgment.

Where AI Is Increasing Productivity in QA

At this stage, QA is already under high pressure due to faster releases, growing test scope, and constant changes across applications. You not only have to test more, but also make sure the effort doesn’t keep increasing every cycle.

This is where AI comes into the picture. You can see it in areas where teams usually lose the most time, such as creating tests, maintaining automation, reviewing failures, and running regressions faster. One of the first places where this becomes evident is in test case creation.

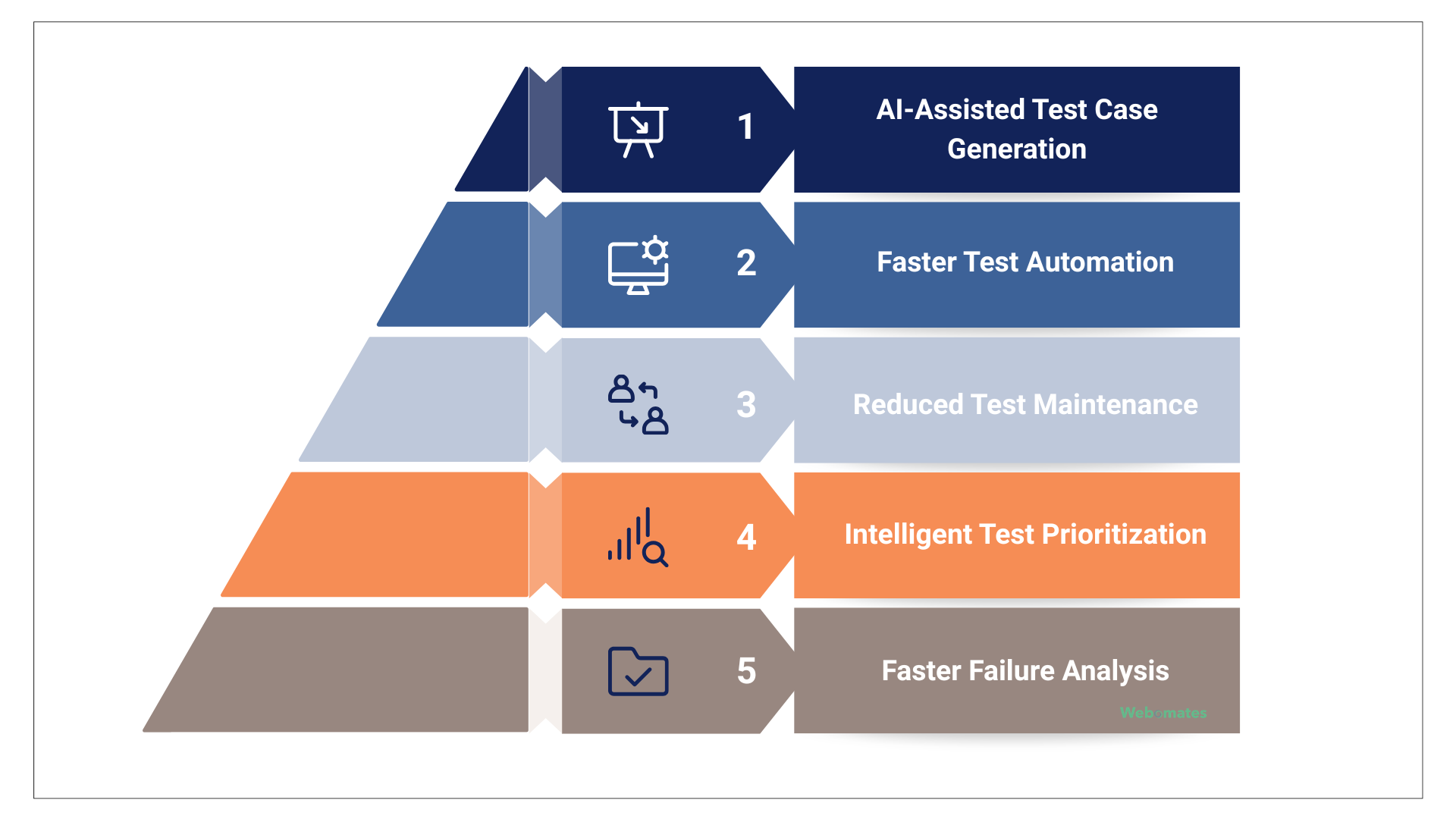

AI-Assisted Test Case Generation

Writing test cases manually takes time and often depends on how you interpret the requirements. As features evolve, keeping coverage updated becomes harder.

This is where AI becomes quite useful. It helps by generating test scenarios directly from requirements, reducing manual effort and improving coverage as applications grow.

Webo.ai has already demonstrated this in actual testing environments.

- A full automation setup was completed in 6 weeks, starting with 325 automated test cases and later scaling to 746.

- 563 test cases were created within 3 months for integration testing.

- In one instance, the internal team spent only 9 hours reviewing and approving generated test cases instead of creating them manually.

This allows teams to scale test creation faster and handle growing demand more efficiently.

Faster Test Automation

Building automation usually takes time, scripting effort, and ongoing maintenance. AI helps you by generating scripts from natural language or user flows.

With Webo.ai:

- Full test automation was completed in just 2 weeks, with 386 complex test cases prepared for critical business scenarios.

- Parallel automation runs were enabled for multiple report versions.

For day-to-day validation:

- Smoke tests delivered results within 15 minutes to 1 hour.

- Overnight regression runs were completed with results available by the next business morning.

This helps teams automate faster, test more frequently, and support rapid release cycles.

Reduced Test Maintenance

Maintaining automation is often one of the biggest hidden costs. Self-healing scripts and smart locator updates reduce the time you spend fixing broken tests. With Webo.ai:

- Its AiHealing® capability was used to fix automation failures caused by locator changes, timeouts, and feature updates that would normally require manual rework.

- In one implementation, 1,289 test cases were AiHealed, while in another, 383 test cases were AiHealed.

- 581 additional fixes were completed in a separate integration project.

- Issues that often took weeks to repair were resolved in less than a day.

This keeps automation reliable even as applications constantly change.
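How AiHealing® works internally is not described here, but the general idea behind self-healing locators can be illustrated with a minimal Python sketch. It assumes a toy page model rather than a real browser: the `Element` and `HealingLocator` names, the attribute names, and the fallback order are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Element:
    """Toy stand-in for a DOM element (hypothetical, not a browser API)."""
    attrs: dict


@dataclass
class HealingLocator:
    """Tries a primary locator, then fallbacks, and records any healing."""
    name: str
    candidates: list  # ordered (attribute, value) pairs, primary first
    healed_with: Optional[tuple] = None

    def find(self, page: list) -> Optional[Element]:
        for index, (attr, value) in enumerate(self.candidates):
            for element in page:
                if element.attrs.get(attr) == value:
                    # index > 0 means the primary locator no longer matched
                    self.healed_with = (attr, value) if index > 0 else None
                    return element
        return None


# Assumed scenario: a release renamed the button id,
# but the data-test attribute survived.
page_after_release = [
    Element({"id": "btn-submit-v2", "data-test": "checkout-submit"}),
]

locator = HealingLocator(
    name="checkout submit button",
    candidates=[("id", "btn-submit"), ("data-test", "checkout-submit")],
)

element = locator.find(page_after_release)
print(element is not None, locator.healed_with)
```

In a sketch like this, the recorded `healed_with` value is what a reviewer would look at to decide whether the fallback should become the new primary locator.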

Intelligent Test Prioritization

AI analyzes risk areas, code changes, and historical defects to help you decide what needs to be tested first. With Webo.ai:

- Findings are categorized by business priority, which helps teams focus first on the issues that need immediate attention.

- 175 valid defects were identified from 238 logged issues in one project.

- 95 valid defects were confirmed in another, including 13 Priority 1 and 44 Priority 2 issues.

- 58 valid defects were reported in a separate project, including 10 Priority 1 and 35 Priority 2 findings.

This reduces wasted effort and helps teams prioritize what matters most.
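To make the idea of risk-based ordering concrete, here is a minimal Python sketch that ranks tests using the three signals mentioned above: code changes, historical defects, and business priority. The `CandidateTest` fields, the weights, and the sample tests are assumptions made up for illustration, not how any particular tool scores risk.

```python
from dataclasses import dataclass


@dataclass
class CandidateTest:
    name: str
    touches_changed_code: bool  # covers code changed in this release?
    past_defects: int           # defects previously found in this area
    business_priority: int      # 1 = most critical, 3 = least critical


def risk_score(test: CandidateTest) -> float:
    """Higher score means run earlier. Weights are illustrative assumptions."""
    change_weight = 5.0 if test.touches_changed_code else 0.0
    defect_weight = float(min(test.past_defects, 5))  # cap the defect signal
    priority_weight = {1: 4.0, 2: 2.0, 3: 0.0}[test.business_priority]
    return change_weight + defect_weight + priority_weight


def prioritize(tests):
    """Return tests ordered from highest to lowest risk score."""
    return sorted(tests, key=risk_score, reverse=True)


tests = [
    CandidateTest("profile_avatar_upload", False, 0, 3),
    CandidateTest("report_export", False, 4, 2),
    CandidateTest("login", True, 1, 1),
    CandidateTest("checkout_payment", True, 3, 1),
]

ordered = [t.name for t in prioritize(tests)]
print(ordered)
# ['checkout_payment', 'login', 'report_export', 'profile_avatar_upload']
```

The weights are the part a team would tune. The point of the sketch is only that the ordering becomes explicit and reviewable instead of living in someone's head.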

Faster Failure Analysis

AI analyzes test results and error logs to understand why a failure happened. This reduces the time you spend identifying the root cause of failures. Webo.ai has made this easier through detailed defect analysis.

- Its reporting includes defect summaries, replication steps, mapped test cases, and supporting notes, which give teams a clear direction on what failed and where to look first.

- It also provides video evidence for failed scenarios, which helps teams review issues faster without repeating the same tests manually.

- Detailed triage reporting makes it easier to separate valid defects, false failures, and lower-priority issues.

This helps teams move faster from investigation to resolution.
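One simple way to picture automated failure analysis is grouping failures that share the same underlying error. The Python sketch below does this by normalizing error messages into a signature. The test names and log messages are invented for illustration, and real tools are likely to use far richer signals than a regular expression.

```python
import re
from collections import defaultdict


def signature(message: str) -> str:
    """Collapse volatile details so similar failures share one signature."""
    message = re.sub(r"0x[0-9a-fA-F]+", "<hex>", message)
    message = re.sub(r"\d+", "<n>", message)
    return message.strip().lower()


def group_failures(failures):
    """Group (test_name, message) pairs by normalized error signature."""
    groups = defaultdict(list)
    for test_name, message in failures:
        groups[signature(message)].append(test_name)
    # Largest groups first: one shared cause often explains many failures.
    return sorted(groups.items(), key=lambda item: len(item[1]), reverse=True)


# Hypothetical failures from one regression run.
failures = [
    ("test_checkout_card", "TimeoutError: payment-service did not respond in 3000 ms"),
    ("test_checkout_wallet", "TimeoutError: payment-service did not respond in 3012 ms"),
    ("test_refund", "TimeoutError: payment-service did not respond in 2998 ms"),
    ("test_profile_banner", "AssertionError: expected width 320 but got 318"),
]

grouped = group_failures(failures)
for sig, names in grouped:
    print(len(names), sig, names)
```

Here three of the four failures collapse into a single payment-service timeout, which is the kind of grouping that tells a team where to look first.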

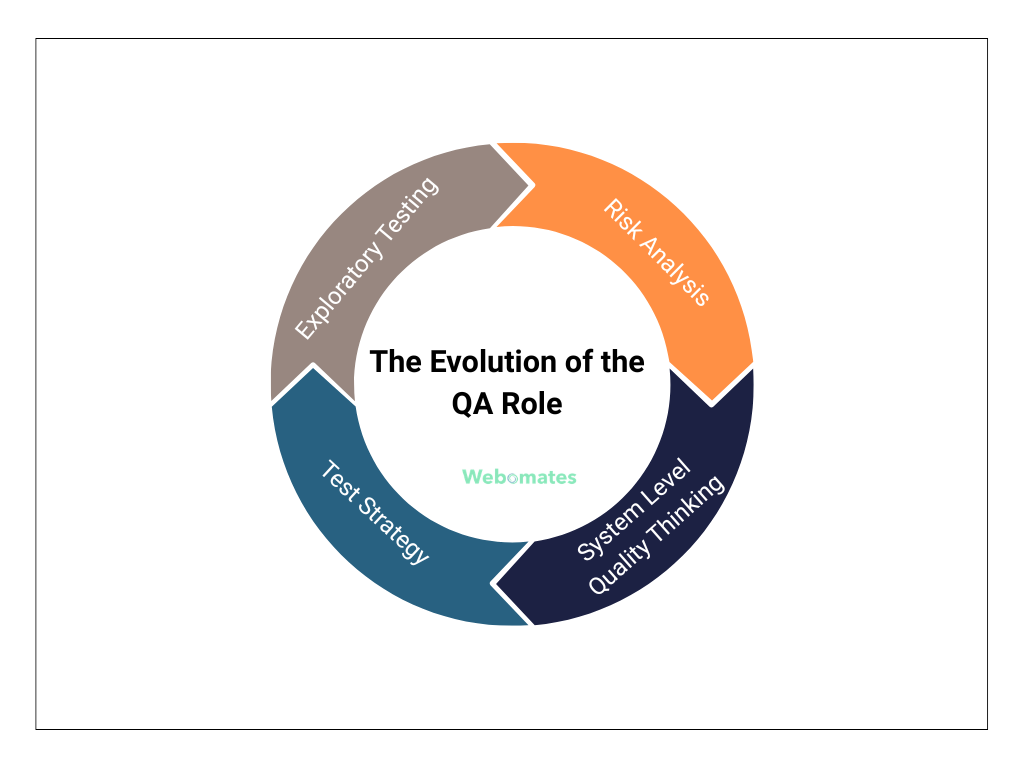

The Evolution of the QA Role

Test execution is no longer the main focus. As more of it gets automated, the role is moving toward areas that need judgment and context. Exploratory testing is one of the areas where you can already see this happening.

Exploratory Testing

Exploratory testing is becoming more valuable as automation takes over predictable and repeatable checks. Instead of following predefined scripts, testers explore the product as they use it to uncover edge cases, unexpected behavior, and usability issues that automation may miss. This requires deeper product understanding and a stronger user perspective.

Risk Analysis

QA is also becoming more focused on risk analysis. This means identifying the areas most likely to fail before testing begins by looking at code changes, past defects, and system dependencies. When time is limited, this helps teams focus effort where it matters most instead of trying to test everything equally.

Test Strategy

The role now goes beyond writing individual test cases. It increasingly involves deciding the overall testing approach, including what should be automated, what still needs manual validation, and which test levels are most important. The focus shifts toward coverage, efficiency, and alignment with business priorities.

System-Level Quality Thinking

Many applications today require QA to look beyond individual features and evaluate how the product behaves as a whole. This includes service interactions, performance under load, and reliability across environments. In distributed systems, failures often happen between connected components rather than within a single feature.

Taken together, as AI takes on more of the repetitive, data-heavy work, testing becomes less about execution alone.

The Future - Humans Designing, AI Executing

The future is not about AI replacing QA or humans. It is about a clear split in responsibility, where machines take care of repetitive work and fast execution, while people focus on judgment and direction.

Humans Define What Matters

As execution becomes more automated, the human role becomes more strategic. Instead of spending hours writing every regression scenario, you can decide which business flows, customer journeys, or integrations carry the highest release risk.

When a revenue-critical checkout flow changes, the question is not whether every test should run, but whether payment risk, pricing accuracy, or refund failures need deeper attention. According to Capgemini’s World Quality Report, communication, judgment, and collaboration are key skills for quality teams, showing human decision-making remains central.

AI Takes Care of Execution

Once your priorities are clear, AI can take over much of the time-consuming execution work.

When a pricing update goes live, AI can identify affected scenarios, regenerate relevant tests, run regression checks overnight, and flag failures before teams start work. This shortens validation cycles and allows releases to move faster without adding more manual effort.
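The "identify affected scenarios" step can be sketched in Python as a lookup from changed files to the tests that exercise them. The `coverage_map` below and every file and test name in it are hypothetical. In practice such a mapping would come from coverage data or a dependency graph.

```python
def select_affected_tests(changed_files, coverage_map):
    """Return tests whose covered files overlap with the changed files."""
    changed = set(changed_files)
    return sorted(
        test for test, covered in coverage_map.items() if changed & set(covered)
    )


# Hypothetical mapping of tests to the source files they exercise.
coverage_map = {
    "test_cart_total": ["pricing/rules.py", "cart/cart.py"],
    "test_invoice_pdf": ["billing/invoice.py", "pricing/rules.py"],
    "test_login": ["auth/session.py"],
}

# Assumed change set for a pricing update.
affected = select_affected_tests(["pricing/rules.py"], coverage_map)
print(affected)  # ['test_cart_total', 'test_invoice_pdf']
```

Only the two tests that touch the pricing rules are selected, so an overnight run can focus on them first while the rest of the suite runs as usual.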

McKinsey states that high-performing software teams using AI reported 16% to 30% improvements in productivity, customer experience, and time to market.

Interpretation Still Stays With Humans

When multiple tests fail after a release, the challenge is in understanding what those failures mean. AI can group similar logs, identify patterns, and highlight likely causes. But the decision still rests with you.

You have to determine whether the failures point to one underlying issue, a production blocker, or something minor that can wait. A login issue, payment failure, or broken reporting flow does not carry the same urgency as a minor UI defect. According to TechRadar, AI-generated testing outputs still require human review to catch blind spots, missed scenarios, and poor decisions.

QA Becomes More About Decisions Than Execution

The role of QA is shifting from only running tests to helping teams make better release decisions. This includes deciding whether a feature is launch-ready, whether testing is enough for an urgent fix, or whether performance risk is acceptable before a major campaign.

Capgemini’s World Quality Report highlights that Generative AI was ranked the top skill for quality engineers (63%), closely followed by core quality engineering skills (60%). This shows the future of QA depends on combining strong judgment with technical expertise.

Conclusion

AI is not replacing QA, but it’s changing how you spend your time. It takes care of repetitive work, while you decide what matters and what doesn’t. You don’t have to spend time writing and fixing the same tests again and again. Instead, you can look at the product more closely, think about risks, and decide what actually needs attention.

Webo.ai turns requirements into tests, runs them, and keeps them updated, so you’re not dealing with all of that manually.

FAQs

Will AI replace QA engineers?

No. AI is reducing the time spent on repetitive tasks such as test creation, execution, and maintenance, but judgment-driven work still needs people. Areas like release risk, exploratory testing, and business impact decisions remain human-led.

How should leaders measure ROI from AI in QA?

The best indicators are reduction in testing effort, faster release validation, fewer escaped defects, lower maintenance overhead, and better use of engineering time. ROI is stronger when AI is tied to workflow outcomes, not isolated experiments.

How does AI help with faster releases?

AI shortens validation cycles by generating tests faster, running regressions more frequently, and highlighting failures quickly. This gives teams feedback sooner and helps releases move with more confidence.

How does Webo.ai help QA teams use AI without replacing testers?

Webo.ai is designed to remove repetitive testing work, not human expertise. It helps teams generate test cases, automate execution, self-heal broken scripts, and speed up regression cycles, while testers stay focused on exploratory testing, release decisions, risk analysis, and overall quality strategy.