Published on January 12, 2026

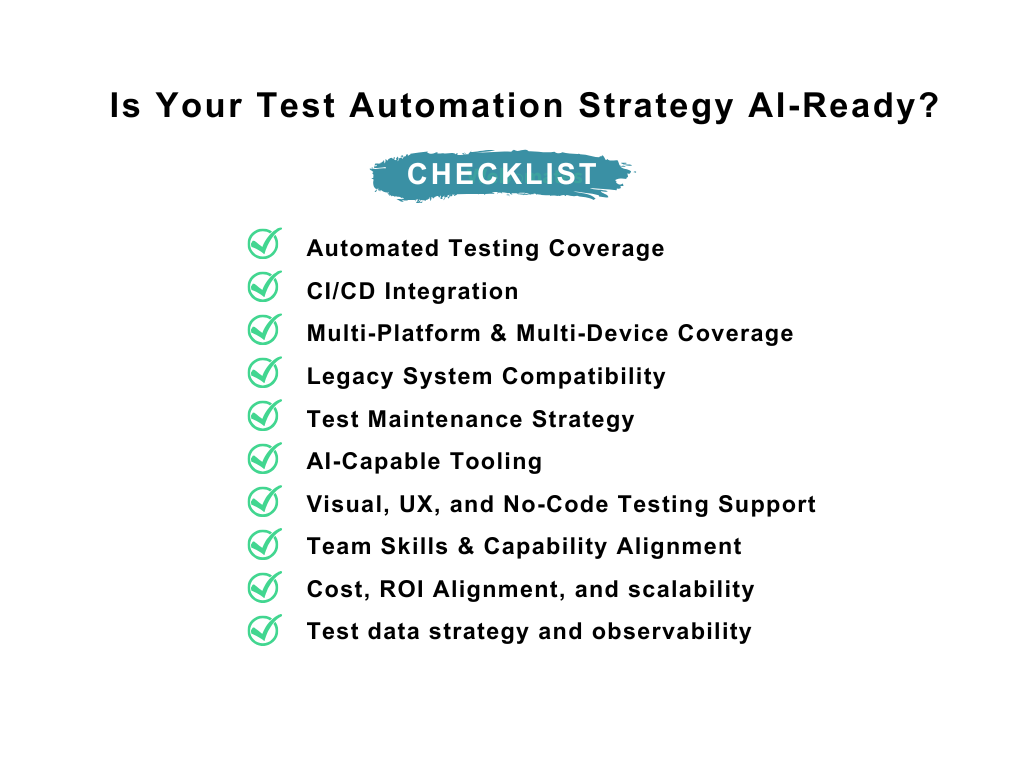

Is Your Test Automation Strategy AI-Ready? A 10-Point Checklist for QA Managers in 2026

By mid-2026, test automation will move from a supporting function to a core driver of release predictability, delivery confidence, and engineering efficiency. As release cycles accelerate and reliability expectations rise, AI-driven testing is shifting from experimentation to necessity.

AI-assisted test creation, self-healing automation, and predictive quality signals are advancing quickly, but AI delivers value only when built on strong automation foundations. For organizations operating with limited QA bandwidth and fast delivery pressure, brittle automation turns AI into a risk amplifier rather than an advantage.

To determine whether automation strategies are prepared for this shift, it is necessary to look beyond tools and focus on fundamentals. The following 10-point checklist highlights the structural, technical, and organizational indicators of test automation that can adopt AI in 2026 without increasing delivery risk or operational noise.

1) Automated Testing Coverage

AI-ready automation begins with coverage that reflects real business risk. A strong foundation includes stable regression tests, smoke tests, API coverage, and validation of critical user flows. Coverage that focuses on low-impact or rarely used scenarios adds maintenance overhead without meaningful protection.

Why this matters for AI readiness:

AI-driven testing tools learn from patterns in execution. When automation consistently validates high-value, repeatable workflows, AI can prioritize risk, optimize coverage, and surface insights that align with business impact rather than test volume.
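One way to make "coverage that reflects real business risk" concrete is to score each automated test and review the ranking. The sketch below is only an illustration: the `AutomatedCheck` fields, the 1-5 scales, and the scoring formula are assumptions chosen for this example, not a method prescribed by any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AutomatedCheck:
    name: str
    business_impact: int   # hypothetical 1-5 scale: cost of this flow breaking
    usage_frequency: int   # hypothetical 1-5 scale: how often users hit this flow
    recent_failures: int   # failures observed in a recent window of runs


def risk_score(check: AutomatedCheck) -> int:
    """Illustrative score: impact times frequency, nudged up by recent failures."""
    return check.business_impact * check.usage_frequency + check.recent_failures


def prioritize(checks: list[AutomatedCheck]) -> list[AutomatedCheck]:
    """Return checks ordered from highest to lowest risk score."""
    return sorted(checks, key=risk_score, reverse=True)


# Made-up suite entries, for illustration only.
suite = [
    AutomatedCheck("checkout_payment", business_impact=5, usage_frequency=5, recent_failures=1),
    AutomatedCheck("profile_avatar_upload", business_impact=1, usage_frequency=2, recent_failures=0),
    AutomatedCheck("login", business_impact=5, usage_frequency=5, recent_failures=0),
]

for check in prioritize(suite):
    print(f"{check.name}: {risk_score(check)}")
```

Tests that land at the bottom of such a ranking are candidates for review: they may be the low-impact coverage that adds maintenance overhead without meaningful protection.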

2) CI/CD Integration

Automation that runs outside the delivery pipeline is disconnected from decision-making. For AI to add value, tests must execute continuously, on every meaningful code change, with fast and reliable feedback loops.

Why this matters for AI readiness:

AI needs a continuous stream of execution data tied to specific code changes. When tests run inside the pipeline on every meaningful change, AI can relate failures to the changes that introduced them and surface risk before release. Tests that run ad hoc, outside the pipeline, leave gaps in that history and weaken every insight built on it.

3) Multi-Platform and Multi-Device Coverage

Modern products rarely live on a single surface. Web, mobile, APIs, cloud services, and third-party integrations all contribute to the end-user experience. Automation must reflect this reality.

Why this matters for AI readiness:

AI models derive insight from breadth. Cross-platform execution data allows AI to detect systemic issues, identify cascading failures, and recognize patterns that isolated test environments cannot reveal.

4) Legacy System Compatibility

Most teams operate with a mix of modern and legacy components. Some areas remain difficult or impractical to automate due to architectural constraints or proprietary interfaces.

Why this matters for AI readiness:

AI cannot reason about systems it cannot observe. Clear visibility into what can and cannot be automated prevents false expectations and ensures AI efforts focus where execution data is available and actionable.

5) Test Maintenance Strategy

Unstable locators, flaky tests, and unclear ownership signal automation debt. Without deliberate maintenance practices, test suites become noisy and unreliable.

Why this matters for AI readiness:

AI-powered self-healing and optimization depend on clean baselines. Poorly maintained automation reduces the accuracy of AI-driven insights and limits its ability to adapt intelligently to change.
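A clean baseline starts with knowing which tests are flaky. As a minimal sketch, assuming run history is available as `(test_name, passed)` pairs collected against the same code revision, a test that both passed and failed is flagged. The function name, the `min_runs` threshold, and the sample history are hypothetical.

```python
from collections import defaultdict


def flaky_tests(runs, min_runs=5):
    """Return {test_name: pass_rate} for tests with mixed outcomes.

    `runs` is an iterable of (test_name, passed) tuples gathered against the
    same code revision, so mixed outcomes suggest nondeterminism rather than
    a real regression.
    """
    outcomes = defaultdict(list)
    for name, passed in runs:
        outcomes[name].append(passed)

    report = {}
    for name, results in outcomes.items():
        if len(results) < min_runs:
            continue  # too little history to judge
        if any(results) and not all(results):
            report[name] = sum(results) / len(results)
    return report


# Made-up history: one intermittent test, one stable pass, one stable failure.
history = (
    [("search_filters", True)] * 4 + [("search_filters", False)]
    + [("login", True)] * 5
    + [("export_csv", False)] * 5
)
print(flaky_tests(history))
```

Note that a test failing on every run is not reported here. It is consistently broken rather than flaky, and needs a fix or an owner, not a retry.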

6) AI-Capable Tooling

Not all automation tools are designed to support AI-native workflows. Capabilities such as self-healing locators, AI-assisted test generation, anomaly detection, and predictive analysis distinguish genuine AI readiness from surface-level adoption.

Why this matters for AI readiness:

Without tooling built to capture and interpret rich execution data, AI cannot reduce effort, improve stability, or deliver meaningful intelligence.
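To illustrate the idea behind self-healing locators, the sketch below tries an ordered list of fallback locators and records when the preferred one no longer matches. This is a deliberately simplified stand-in: the `page` dict simulates a browser driver, the locator strings are invented, and commercial tools may use richer techniques than an ordered fallback list.

```python
def find_element(page, locators):
    """Try each locator in order; return (element, locator_used) or (None, None).

    `page` is a plain dict mapping locator strings to elements, standing in
    for a real browser driver.
    """
    for locator in locators:
        element = page.get(locator)
        if element is not None:
            return element, locator
    return None, None


# Hypothetical locators, ordered from most to least preferred.
submit_locators = ["id=submit-btn", "css=button[type=submit]", "text=Place order"]

# Simulated page after a refactor renamed the button id.
page_after_refactor = {
    "css=button[type=submit]": "<button>",
    "text=Place order": "<button>",
}

element, used = find_element(page_after_refactor, submit_locators)
healed = used is not None and used != submit_locators[0]
print(used, healed)
```

Recording the `healed` flag matters as much as the fallback itself: a test that silently heals on every run is hiding a locator that should be updated.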

7) Visual, UX, and No-Code Testing Support

Traditional automation excels at validating functionality but often struggles with visual regressions and UX inconsistencies. As interfaces evolve rapidly, this gap becomes more pronounced.

Why this matters for AI readiness:

AI is particularly effective at detecting visual anomalies and behavioral deviations, but only when automation captures UX-level signals. Visual and no-code testing expand the data AI can analyze and act upon.

8) Team Skills and Capability Alignment

AI changes how testing is performed, not whether expertise is required. Automation fundamentals, framework literacy, and the ability to interpret AI-driven insights remain essential.

Why this matters for AI readiness:

AI amplifies existing capability. Skill gaps in automation design or analysis limit adoption and reduce the value AI can deliver.

9) Cost, ROI, and Scalability Discipline

Automation carries real cost in tooling, maintenance, and training. AI magnifies returns only when automation already delivers measurable value.

Why this matters for AI readiness:

Scalable, ROI-aligned automation produces the volume and diversity of data AI needs to optimize execution, detect trends, and predict delivery risk without increasing operational overhead.
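Whether automation "already delivers measurable value" can be checked with simple arithmetic. The sketch below uses the standard ROI ratio, (benefit - cost) / cost. The cost categories follow the ones named above (tooling, maintenance, training), while the parameter names and all figures are hypothetical.

```python
def automation_roi(manual_hours_saved, hourly_rate, tooling_cost,
                   maintenance_hours, training_cost):
    """Return ROI as a ratio: (benefit - cost) / cost.

    Benefit is the manual testing effort avoided; cost covers tooling,
    maintenance effort, and training over the same period.
    """
    benefit = manual_hours_saved * hourly_rate
    cost = tooling_cost + maintenance_hours * hourly_rate + training_cost
    if cost <= 0:
        raise ValueError("total cost must be positive")
    return (benefit - cost) / cost


# Hypothetical annual figures, for illustration only.
roi = automation_roi(
    manual_hours_saved=1200,
    hourly_rate=50,
    tooling_cost=12_000,
    maintenance_hours=300,
    training_cost=3_000,
)
print(f"ROI: {roi:.0%}")
```

With these made-up inputs the benefit is 60,000 against a cost of 30,000, so the ratio is 1.0. Tracking the same calculation over time shows whether maintenance hours are eroding the return.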

10) Test Data Strategy and Observability

AI-ready automation depends on realistic test data and clear visibility into execution outcomes. Automation that relies on static or non-representative data limits the accuracy of both test results and AI-driven insights.

Why this matters for AI readiness:

AI models learn from execution signals. High-quality test data and observable outcomes enable AI to detect patterns, prioritize risk, and support confident release decisions, rather than merely reporting isolated pass/fail results.

Implications for Product-Led Engineering Teams

AI does not resolve weak automation strategies; it exposes them. Teams with fragmented, brittle, or siloed automation will see AI accelerate instability. Teams with disciplined, CI-aligned, and risk-focused automation will see AI increase confidence, coverage, and velocity.

AI readiness is not a tooling milestone. It is a leadership decision about predictability under pressure.

Conclusion

AI-ready test automation is not about adopting the newest technology. It is about building a delivery system that remains reliable as speed and complexity increase. Organizations that invest in strong automation foundations today position themselves to release faster, recover quicker, and scale with confidence tomorrow.

Those that do not will find that AI delivers exactly what their systems allow, nothing more.

“AI rewards strong automation foundations.”

Webo.AI enables teams to modernize test automation with self-healing, AI-generated coverage, and execution intelligence, helping organizations ship faster with fewer production surprises.

Put the 10-point checklist into practice. Start a free trial with Webo.AI and get self-healing tests, coverage intelligence, and execution reliability—so you can ship faster with confidence.

FAQs

1. Does AI readiness require replacing existing automation frameworks?

No. Most organizations do not need to replace their existing automation frameworks to become AI-ready. In many cases, readiness depends less on the tools in use and more on how automation is structured and maintained.

Frameworks that already support stable execution, meaningful coverage of critical workflows, and consistent integration with CI pipelines can produce the data AI systems rely on. Gaps typically arise from issues such as brittle tests, fragmented coverage, limited observability, or inconsistent execution across environments. When teams address these foundational areas, they often make greater progress toward AI readiness than by replacing the framework itself.

2. Is AI-driven test generation safe for complex or regulated systems?

AI-driven test generation can be useful when paired with human review. It works best for speeding up coverage discovery and identifying scenarios that teams might otherwise miss, not for removing validation responsibility.

In complex or regulated environments, tests still need clear ownership, review, and traceability. AI can help teams move faster, but accountability for what gets tested and released must remain with people.

3. How should teams decide which tests matter most for AI learning?

Tests that cover what users depend on day to day matter most. This includes critical user journeys, revenue-impacting flows, and behavior that the system must get right on every release.

Tests focused on rarely used paths or low-risk scenarios are less useful for AI learning. While they may have value in specific situations, they often add noise and do little to improve confidence or decision-making at release time.

4. Can smaller QA teams benefit from AI-driven testing?

Yes. Smaller teams benefit when automation is focused and well-maintained. AI can reduce day-to-day maintenance, bring attention to risk sooner, and make it easier to decide where testing effort is best spent, without increasing headcount.