Published on January 27, 2026

The QA Leader's Dilemma in 2026: Should We Build Our Own AI Test Tools or Buy Off-the-Shelf?

For many engineering leaders, testing has stopped feeling like a clear win.

Coverage and automation have both increased, and AI in software testing is now part of everyday workflows. Yet release confidence has not improved where it counts: in day-to-day delivery decisions.

Tests that passed in one sprint fail in the next without an obvious cause. Automation suites keep growing but demand constant attention. Over time, senior engineers start showing up repeatedly in test failure triage, release reviews, and post-mortems.

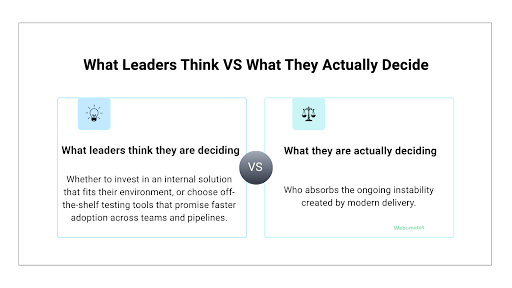

When this pattern repeats, the conversation turns to tooling. Should teams build stronger internal capability, or adopt off-the-shelf testing tools to scale AI test automation?

But this is not really a tooling problem. It is a question of who owns ongoing instability when change is constant. That instability does not disappear; it settles into day-to-day work and gradually consumes more senior engineering time.

That is the dilemma shaping QA and engineering strategy in 2026, whether it is named explicitly or not.

Why Build vs Buy No Longer Holds in 2026

Traditional build vs buy decisions were made in a very different environment. Systems were more stable, maintenance costs were easier to predict, and test automation assets were expected to last.

What changed

In 2026, those assumptions no longer apply. Product interfaces now change continuously, and workflows are refactored mid-cycle. While AI-assisted development accelerates delivery, it also increases volatility across the test layer.

As a result, the cost center in testing has shifted. The primary effort is no longer in writing tests, but in keeping them reliable as everything around them changes.

Why the old logic breaks

This breaks the old build vs buy logic for QA, because those decisions were designed for stability, not constant change. When reliability becomes a moving target, comparing internal capability against external features no longer captures the real tradeoff leaders are making.

Instead, the decision shifts toward a different issue altogether: where ongoing instability is absorbed and how much organizational effort it consumes over time.

Internal tools may look efficient early, while off-the-shelf testing tools reduce upfront effort. In both cases, the real impact becomes visible over time, not during initial rollout.

So when leaders focus primarily on features or frameworks, they are often solving the wrong problem. What matters more is where maintenance effort accumulates and how much senior engineering attention is required to sustain confidence as change becomes constant.

That is why build vs buy is no longer just a tooling choice, but a decision about ownership and risk.

The New Cost Center No One Planned For: Sustained Test Reliability

The hidden cost of AI-driven testing is not in creating tests faster, but in the effort it takes to keep those tests trustworthy over time.

A minor UI update here, a reworked flow there, or an AI-driven behavior change elsewhere might each look manageable on its own. But together, they create continuous maintenance pressure. Over time, this shows up in familiar ways:

- Maintenance drag becomes delivery drag: Engineering capacity quietly shifts from planned work to unplanned stabilization.

- Delivery drag becomes missed release windows: Teams hesitate to ship because test results no longer inspire confidence.

- Missed windows put revenue expectations at risk: Commitments slip, roadmaps adjust defensively, and trust erodes with both customers and internal stakeholders.

These costs rarely show up cleanly on a roadmap or budget line. They surface in slower cycles, repeated escalations, and senior engineers being pulled into defending test stability instead of building product.

For many leaders, this is the uncomfortable realization. Test reliability, once a one-time investment tied to automation rollout, has become a standing operational expense that grows alongside change.

The Real Cost of Building AI Test Tools In-House

Internal AI test tooling often delivers impressive early prototypes. The risk emerges later, not as outright failure, but as persistent friction that consumes attention.

The cost shows up in specific ways:

- Senior engineering time diverted: Time that should be spent on product direction, architecture, or delivery decisions is redirected toward keeping internal tools stable.

- Ongoing operational overhead: Model tuning, infrastructure upkeep, security reviews, and compliance readiness become recurring responsibilities rather than one-time efforts.

- Concentrated ownership risk: Critical knowledge ends up held by a small group, which increases continuity risk when roles change or key individuals leave.

Together, these patterns shift reliability from systems to people. And if test reliability depends on named individuals, the system is already fragile.

When Building Does Make Sense — And Why Most Teams Don’t Qualify

There are cases where building tools internally is justified. Most teams believe they qualify, but very few actually face the constraints that make it necessary. Those constraints are imposed by the environment, not chosen.

- Regulatory constraints: In highly regulated environments, auditability or data residency requirements can restrict the use of external platforms. In these cases, building an internal solution may be the only viable option, regardless of what external tools can offer.

- Proprietary workflows: When testing logic is tightly bound to unique internal systems or domain-specific behavior, abstraction becomes difficult, and off-the-shelf solutions may not fit without significant customization.

- Product-level differentiation: In a few platform companies, customers directly use testing features as part of the product. Here, building internally supports the product, not just internal delivery.

Often, teams choose to build internally not because their constraints are truly different, but because they are uncomfortable relying on external tools.

What Buying Actually Means in 2026

Buying AI test tools in 2026 is not just about acquiring more features. It is about deciding where volatility should live.

As AI test automation becomes part of everyday delivery, mature vendors increasingly absorb sources of change that would otherwise land on internal teams.

- UI instability and workflow change: As interfaces evolve and user flows shift, off-the-shelf testing tools are expected to adapt without pulling engineering teams into constant rework.

- Framework churn and compatibility shifts: Changes across browsers, test frameworks, CI/CD pipelines, and supporting libraries are handled by the vendors rather than internal teams.

- Model evolution and infrastructure upkeep: As AI in software testing continues to change, vendors carry responsibility for updating models, managing infrastructure, and keeping systems reliable at scale.

When leaders evaluate off-the-shelf testing tools purely on capability, they often miss the more important question: where does the maintenance burden live over time?

The Internal Lock-In Risk Leaders Often Miss

Vendor lock-in is often cited as a reason to build internal tools. In practice, internal lock-in is often the larger risk.

- Staffing lock-in: Continuity depends on specific individuals who understand the system well enough to keep it running. When they move on, reliability becomes harder to sustain.

- Knowledge lock-in: Only a few people know how the system actually works. When others need to change it, progress slows or mistakes creep in.

- Process rigidity: As internal tools grow, teams start working around them instead of through them. Over time, this limits how quickly changes can be made when delivery pressure increases.

And unlike vendor dependency, once internal lock-in sets in, it is difficult to reverse without disruption.

The Questions Leaders Should Actually Ask

Strong build or buy decisions hold up because the hardest questions are answered before tools are chosen.

- What business metric improves in the next 90 days? If the impact cannot be seen in delivery speed, release confidence, or risk reduction, then the decision is likely premature.

- Which failure mode are we actively reducing? Flakiness, maintenance load, delayed releases, or dependency on key individuals. If the risk is unclear, so is the decision.

- Who owns this if key people leave? If ownership depends on specific individuals, it rarely scales, regardless of whether the system is built internally or bought.

- What does success look like when usage doubles? Many approaches work well at a pilot level. But very few remain effective when they are adopted across teams and pipelines.

Any AI testing approach, internal or external, that cannot answer these questions clearly is unlikely to scale without friction.
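One way to make the "which failure mode are we actively reducing?" question measurable is to track flakiness directly. The sketch below is a minimal illustration, assuming CI results can be exported as simple (test, commit, outcome) records; the `CiResult` record and both functions are hypothetical names, not part of any particular CI system or vendor tool. It treats a test as flaky when it both passed and failed on the same commit, since identical code producing different outcomes cannot be explained by a code change.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CiResult:
    """One test outcome from a CI run (hypothetical record shape)."""
    test_id: str
    commit_sha: str
    passed: bool


def find_flaky_tests(results: list[CiResult]) -> set[str]:
    """Return tests that both passed and failed on the same commit."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for result in results:
        outcomes[(result.test_id, result.commit_sha)].add(result.passed)
    # A set holding both True and False means mixed outcomes for identical code.
    return {test_id for (test_id, _sha), seen in outcomes.items() if len(seen) == 2}


def flakiness_rate(results: list[CiResult]) -> float:
    """Fraction of distinct tests that showed flaky behavior at least once."""
    all_tests = {result.test_id for result in results}
    if not all_tests:
        return 0.0
    return len(find_flaky_tests(results)) / len(all_tests)


if __name__ == "__main__":
    results = [
        CiResult("checkout_flow", "a1b2c3", True),
        CiResult("checkout_flow", "a1b2c3", False),  # retry on the same commit failed
        CiResult("login", "a1b2c3", True),
        CiResult("login", "d4e5f6", False),          # different commit: not counted as flaky
        CiResult("search", "d4e5f6", True),
    ]
    print(sorted(find_flaky_tests(results)))  # ['checkout_flow']
    print(f"{flakiness_rate(results):.2f}")   # 0.33
```

The same-commit rule is deliberately conservative: it ignores failures that a code change could explain, so it undercounts flakiness that only shows up across commits. Tracked over a fixed window, such as the 90 days mentioned above, a rate like this could give a rough baseline for comparing an internal tool against a purchased one.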

Conclusion

Build or buy decisions in testing are rarely about tools. They are about who ends up carrying the ongoing maintenance and reliability work.

In many teams, that responsibility slowly drifts toward senior engineers, simply because nothing else was put in place.

At this stage, the more useful question is not which option is more advanced or more flexible. It is about where instability should live, who is accountable for it over time, and how easily that decision can be revisited as teams and products change.

For teams looking to handle that work more deliberately, Webo.AI focuses on exactly that problem: delivering AI test reliability without the build-and-maintain burden.

See why buying beats building for most teams. Start a free trial with Webo.AI and get stable, scalable AI testing without owning the maintenance.

Ready to Choose Reliability Over DIY?

Get AI-powered test stability without the maintenance lock-in.

Webo.AI handles flakiness, self-healing, and scale so your team can focus on shipping—not sustaining custom tools.

FAQs

How do we know when test instability has become a build vs buy decision, not just a QA issue?

Most teams notice the shift when conversations stop being about fixing individual failures and start revolving around reliability as a whole. Discussions about building vs buying AI test automation tools begin resurfacing sprint after sprint, and senior engineers or managers are repeatedly pulled into triage, release calls, or post-mortems.

At that point, the issue is no longer coverage or speed. It is about who owns reliability as systems, interfaces, and delivery pipelines keep changing.

What are the biggest challenges of developing custom AI test tools?

The hardest part is not making them work once. It is keeping them reliable as products evolve. Teams run into issues adapting to UI changes, keeping pace with CI/CD updates, dealing with model drift, and maintaining continuity once the original builders move on.

Why do feature comparisons fail to capture the real risk in AI testing decisions?

Features describe what a tool can do under ideal conditions. But they say very little about how effort builds up over time, how ownership shifts as teams grow, or how resilient the system is when change is constant. Those are the factors that shape long-term impact.

How does AI change the way testing interacts with CI/CD pipelines?

AI testing integrates easily into CI/CD from a technical standpoint. The harder problem is trust. When results are stable, pipelines move quickly; when confidence drops, pipelines slow down regardless of automation depth. That is why reliability, not integration, becomes the gating factor.

In those cases, platforms like Webo.AI can help absorb ongoing test volatility, reducing how often CI/CD pipelines depend on senior-engineer intervention.